1. Introduction

Democracies are messy. They are constituted not just (or even primarily) by rules and principles written into laws and constitutions, nor by the formal structure of organizations and governments. They are not composed merely of articulated rights and duties, or even values. Democracies function as complex adaptive systems characterized by dynamic interactions between multiple independent actors. They are “dancing landscapes” as described by Kauffman (1993)—constituted by the concurrent adaptation of numerous independent agents responding to and anticipating behavioral patterns of others in their environment. Democracies embrace complexity, rejecting the order imposed by dictators and tyrannical rule in favor of the living order achieved by actors who, in theory and in aspiration, are free to make a wide variety of choices (including choosing or rejecting leaders, laws, and legal outcomes) without fear of retribution. Democracies are performed and experienced, residing in the myriad interactions that make up an ordinary life. The institutions of democracy, such as independent courts, do not function only to investigate and declare when rules have been broken; they also function as democratic spaces where “people’s relationships as civic equals are enacted” (Hadfield and Ryan, 2013). Even the hardest of hard laws—clear rules set out in incontestably valid legal texts—depend on the dance: the voluntary cooperation and conformity of police and judges and presidents (Basu, 2000). And because decisions about cooperation and conformity are based on what any one individual thinks others will do, “the state is nothing but a set of beliefs” (Basu, 2000, p. 8); “law enforcement just is a social convention, and respect for the law just is a social norm” (Sugden, 2004).

The emergence of powerful generative AI brought with it, almost immediately, attention to the question of how to build AI of and for democracy. This question presents significant technical challenges for computational systems that must operate within democratic contexts. How will democratic values be represented in AI systems and agents? How will we ensure that AI systems and agents behave in ways that support and implement democracy and the rule of law? Current technical approaches to democratic alignment primarily focus on preference aggregation and rule encoding. We have seen efforts to collect “democratic inputs” for fine-tuning large language models (OpenAI, 2023; Huang et al., 2024; Bergman et al., 2024); sophisticated fine-tuning technologies to implement “constitutional AI” (Bai et al., 2022); calls for AI alignment to be grounded in methods that elicit and aggregate pluralistic values (Sorensen et al., 2024; Conitzer et al., 2024); and calls for “law-following” AI (O’Keefe et al., 2025). These approaches implicitly assume that democratic values can be exhaustively elicited and encoded as static parameters, and that democracy resides in abstract principles and the aggregation of preferences for collective decision-making. While all of these approaches are meritorious and worth pursuing, they fail to address the computational challenges of participating in evolving normative contexts. None of these approaches takes on board the fact that democracy is a dynamic complex adaptive system, constituted by behavior and beliefs. In a world with increasingly autonomous AI, we have to ask: how might AI systems and agents disrupt, distort, or even destroy the delicate dance of democracy? Any effort, even a democratically inspired one, to elicit and encode human values, preferences, laws, or norms in AI systems will almost surely fail—and fail not only to be democratic, but more fundamentally to protect the stability of democracy.

The AI transition we are facing is one in which the multiplicity of mundane daily interactions in which we engage, with other people, with organizations, with governments and their officials, will also be engaged in by AI agents. If we are to believe the companies pouring billions into building the agentic era, agents will soon enough be making decisions about what products to build and what companies to invest in, how to help students learn, whom to hire and fire, whom to charge with or convict of a misdemeanor, whether to grant or deny a claim for insurance or public benefits, what to say on social media, how to allocate public dollars, and even what public policies to implement. AI agents will be minutely woven into daily life, into the dancing landscape.

Democracy is ultimately an alignment process. Though theoretically reducible to preference aggregation functions, practical democratic processes resist complete static specification. Democracy weaves together the daily decisions of millions of individuals, with their own goals and interests, beliefs, and commitments, to align their behavior with collective well-being, a concept that is itself organically grounded in the idea of equal worth and dignity for all and yet subject to constant contestation and evolution. We argue that, to maintain democratic resilience during the AI transition, it will be essential to build AI agents that are capable of forming the beliefs and choosing the behaviors that mirror those of the human agents that constitute human democracies. They will need to operate within the democratic matrix, taking here the concept of a matrix not from mathematics and the internal structure of neural networks but from the word’s Latin origin, meaning ‘womb,’ to denote the context and structure that nurtures life and development. Rather than being aligned with individual preferences or laws and norms per se, AI must be equipped with the computational capacity to interpret and participate in the complex processes that generate democracies as our shared normative social order (Hadfield and Weingast, 2014): our context, our matrix. This requires building mechanisms that allow an AI system to make decisions in the moment, responsive to a particular normative context, rather than solely relying on pre-trained value alignment. It requires us to build AI systems that possess normative competence, which we define as the computational ability to detect social sanctions, attribute them to specific behaviors, and adjust future actions accordingly (Hadfield, 2025). It requires AI systems and agents that are incentivized to recognize, comply with, and participate in the distributed enforcement of rules and norms. In addition, we will need to build normative classification institutions that are legible to AI and operate at the speed and scale of AI.

Our paper proceeds as follows. In Section 2 we present our theoretical framework, drawing on a microfoundational account of normative social order due to Hadfield and Weingast (2012, 2014). In Section 3 we connect the concept of normative competence to Adam Smith’s theory of the impartial spectator—the internal representation he proposed a moral person uses to assess their behavior as if viewed by a neutral observer (Smith, 1976). Together Sections 2 and 3 ground our theoretical claim that democracy rests on the capacity of individual agents to evaluate their behaviors with reference to a socially constructed and dynamic context. We turn in Section 4 to our assessment of the implications of autonomous AI agents participating in the economy—and why such participation will impact the performance of our democratic social orders. Without the capacity to predict dynamic democratic norms and values and take actions that implement these norms and values in mundane economic tasks, we argue, AI agents risk destabilizing our democratic regimes. Section 5 then sets out a technical research agenda that identifies technical mechanisms to build normative competence into AI agents and digital institutions that support democratic alignment for AI agents. We provide a combination of concrete proposals and broader considerations for systems that support voluntary participation in and maintenance of an evolving, democratic, normative social order.

2. Democracy as Normative Social Order

Most accounts of democracy are either prescriptive or descriptive. Prescriptive accounts tell us what norms and values a democratic society should possess: people should have rights of due process and free speech; they should be treated with equal dignity and autonomy; and, most important, they should have the collective power to choose their leaders and laws and control the exercise of state coercion (Pennock, 1979). Descriptive accounts tell us what features and institutions democracies, old and new, do possess: they hold elections; they enact laws overseen by independent judges; they require their rulers to seek consent from a council or assembly of citizens (Stasavage, 2020).

We take a different approach, grounding our framework for thinking about how to build AI for the democratic matrix in the theory of normative social orders developed by Hadfield and Weingast (2012, 2014). A normative social order is an equilibrium state in which a group of independent actors pursuing ordinary self-interested utility (i.e., not innately motivated by pro-social preferences) are incentivized and coordinated to make costly efforts to punish members of the group who engage in behaviors classified as punishable (disapproved, inappropriate, wrongful) by a classification institution. A normative social order then displays a reliable, stable set of behaviors that are patterned on the shared classification scheme produced by the classification institution: consistent with self-interest, agents avoid the behaviors that are predictably punished. This is what any descriptive or prescriptive theory of democracy is appealing to: a stable pattern in which people and entities (like governments) can be counted on to (mostly) reliably do things like respect due process or treat people of different identities with dignity and respect. Securing that stable pattern means establishing a particular kind of normative social order.

We use this framework to think about AI agents and democracy because, in principle, it makes no assumptions about the nature of the agents in a given society. It does not endow them with uniquely human attributes; it merely presumes these agents are rational maximizers. And it does not presume particular structures of human interaction; it asks only the general question of how a community of maximizing agents can live productively together. This provides theoretical grounding to understand normative properties of AI agents and their integration into specific types of normative social orders, such as democratic normative social orders.

Most theories of norms focus on the challenge of getting agents to comply with norms. But a key innovation of the Hadfield and Weingast (2012) model is to focus attention not on compliance but on punishment: how do we get ordinary agents to punish, and how do we get them to punish the same behaviors? Punishment in this context refers specifically to third-party punishment, a uniquely human behavior that distinguishes us from other mammals (Tomasello and Vaish, 2013). It includes any response that imposes costs on others’ behavioral choices: mocking, criticism/disapproval, exclusion from cooperation, and ostracism from the group, not just the imposition of physical injury or material loss. Third-party punishment is different from second-party retaliation or reciprocity, where an agent imposes costs on another agent based only on their own sense of right and wrong; with third-party punishment, agents punish on the basis of the classifications announced by the classification institution. A regime in which third parties tolerate second-party retaliation only when that retaliation is consistent with a shared classification scheme (Bhui et al., 2019) would nonetheless constitute, in this framework, a third-party punishment regime.

If the punishment problem is solved, then compliance is solved: people avoid punished behaviors. This approach to modeling a normative system emphasizes that norms are the output of an interactive system, produced in equilibrium by the behaviors of agents. This is why Hadfield and Weingast (2012) use classification—a labeling of behaviors as punishable or not—as a primitive concept, rather than norms or rules. Norms and rules imply a lot of structure; they imply the existence of both classification and expected consequences or beliefs in the event of a violation. Moreover, the nature of rules and norms is what we are trying to explain and understand. The Hadfield-Weingast model separates out these elements so we can see how norms and rules are, or are not, produced in equilibrium.
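The claim that solving the punishment problem solves the compliance problem can be illustrated with a small numerical sketch. It is not the Hadfield-Weingast model: the payoff values, the function names, and the simple best-response rule are all assumptions introduced here for illustration. An agent gains `gain` from a behavior and loses `penalty_per_punisher` for each third party willing to punish it, and third parties punish only behaviors that the classification scheme labels punishable.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Behavior:
    name: str
    gain: float  # private benefit to the agent choosing this behavior (assumed value)


def expected_payoff(behavior, punishable, n_punishers, penalty_per_punisher):
    """Payoff of a behavior once third-party punishment is taken into account."""
    punished = behavior.name in punishable
    cost = n_punishers * penalty_per_punisher if punished else 0.0
    return behavior.gain - cost


def best_response(behaviors, punishable, n_punishers, penalty_per_punisher):
    """A self-interested agent simply picks the highest-payoff behavior."""
    return max(
        behaviors,
        key=lambda b: expected_payoff(b, punishable, n_punishers, penalty_per_punisher),
    )


behaviors = [Behavior("honor_contract", 2.0), Behavior("breach_contract", 5.0)]
punishable = {"breach_contract"}  # hypothetical output of a classification institution

# Nobody is willing to punish: the classification has no effect on behavior.
no_enforcement = best_response(behaviors, punishable, n_punishers=0, penalty_per_punisher=1.0)

# Enough third parties punish: the classified behavior is avoided out of self-interest.
with_enforcement = best_response(behaviors, punishable, n_punishers=4, penalty_per_punisher=1.0)

print(no_enforcement.name, with_enforcement.name)
```

In this toy setting the classification is identical in both runs; only the willingness of third parties to punish changes, and the agent's choice changes with it.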

Hadfield and Weingast focus on informal decentralized third-party punishment—delivered voluntarily by ordinary group members—as opposed to formal centralized government enforcement for both empirical and methodological reasons. Empirically, the great majority of human history has been characterized by the absence of a centralized enforcement apparatus (Hadfield and Weingast, 2013) and even in our modern states with government policing powers, many legal rules are enforced, if at all, through decentralized efforts. In some cases (such as the international legal order or in poor countries) this is due to the absence or incapacity of the state. In other cases, formal enforcement is supplemented by informal enforcement, such as when commercial parties enforce contracting norms by refusing to do business with actors who get a reputation for breaching their contracts (Milgrom et al., 1990; Hadfield and Bozovic, 2016). Methodologically, a focus on voluntary participation in punishment efforts is critical for the reasons highlighted by Basu (2000): even the elaborate centralized enforcement apparatus we associate with modern states ultimately depends on the voluntary behavior of individual actors. If the police won’t arrest, prosecutors won’t prosecute, judges won’t rule, presidents won’t abide by court orders, legislators won’t object, and voters won’t toss from office, then formal enforcement of the rules doesn’t happen. So, while we’ll focus on decentralized enforcement, nothing in the model precludes analysis of the role of centralized enforcement.

What incentivizes voluntary participation in costly third-party punishment? This is a challenging open question in the behavioral sciences. A taste for punishing wrongdoers may be innate among humans (Fehr and Gächter, 2002; Fehr and Fischbacher, 2004; Boyd et al., 2003). But it may also be that the obligation to punish wrongdoers is itself a norm that is enforced by third-party punishment, a bootstrapping of the enforcement mechanism (Henrich and Boyd, 2001; Henrich, 2004), or (as Hadfield and Weingast (2012) suggest) a means of signaling ongoing support for, and avoiding the collapse of, a normative social order. These latter mechanisms rest only on rational choice and not on innate human psychology.

If agents can be incentivized to punish, the next question is: how are they coordinated to punish the same things? The Hadfield and Weingast (2012) model here focuses attention on the classification institutions in a given group. In the modern democratic state, classification is often performed by institutions that also possess centralized enforcement authority, or at least the capacity to call on centralized enforcement. Legislatures write laws and they can fund or command a police force. Courts can declare wrongdoing and order private or public actors to take steps to punish a wrongdoer. But the theoretical framework we employ here keeps these functions distinct for analytical purposes.

A classification institution produces a shared classification scheme for a group of agents. To achieve normative social order, this scheme must be unique. In any group of individuals, there may well be multiple sub-groups with classification institutions and schemes, each of which governs behaviors in some domain. In today’s complex societies, for example, each of us belongs to many different groups: households, schools, workplaces, religious groups, political parties, online communities, municipalities, nations, and more. Each of these can have its own classification institution, and we have classification institutions that govern the jurisdiction of these sub-groups. The federal constitution, for example, protects the freedom of religious groups to determine some of the norms governing their members but not all: they can shun members who dress immodestly, engage in premarital sex, or fail to show charity to the poor, but they cannot physically abuse children or violate federal anti-discrimination laws.
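Why a shared scheme matters for coordination can be shown with a deliberately crude sketch. The deterrence rule (a behavior is deterred only if at least `threshold` agents would punish it), the behavior labels, and the function names are assumptions made for this example, not part of the underlying theory.

```python
def punishers_per_behavior(schemes):
    """Count, for each behavior, how many agents classify it as punishable."""
    counts = {}
    for scheme in schemes:
        for behavior in scheme:
            counts[behavior] = counts.get(behavior, 0) + 1
    return counts


def deterred(schemes, threshold):
    """Behaviors that attract at least `threshold` punishers (assumed deterrence rule)."""
    counts = punishers_per_behavior(schemes)
    return {b for b, n in counts.items() if n >= threshold}


# Six agents, each punishing whatever their own private sense of wrong picks out.
private_schemes = [
    {"breach"}, {"fraud"}, {"gouging"},
    {"breach"}, {"fraud"}, {"gouging"},
]

# The same six agents conditioning punishment on one shared classification scheme.
shared_schemes = [{"breach", "fraud"}] * 6

deterred_private = deterred(private_schemes, threshold=4)
deterred_shared = deterred(shared_schemes, threshold=4)
```

With private schemes the same total punishment effort is spread too thinly to deter anything; with a shared scheme every classified behavior clears the threshold.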

A classification institution may be entirely implicit, in the sense that the only way to determine the group’s shared classification scheme is through observation: in a given society or group, we can see which behaviors are reliably chosen and not punished (indicating that they are classified as non-punishable) and which (otherwise self-interested) behaviors are reliably avoided or, if they do occur, are reliably punished. We might also investigate classifications in such a setting by asking members of the group whether a particular behavior is allowed or not; Bicchieri (2005) emphasizes that a society’s norms may depart from observed behaviors for a variety of reasons but could show up in shared beliefs. We use the term “institution” here in the same sense that social structures like kinship are thought of as institutions or as North (1990) uses the term, to refer to the “rules of the game in a society.” When classification is entirely implicit, articulated only in behaviors and beliefs, we can think of the normative social order as ‘culture.’

There is reason to believe that through much of human evolution, when humans lived in relatively small hunter-gatherer bands, classification institutions were entirely implicit. Although we only have the evidence of surviving foragers to go on, anthropologists are largely agreed that the earliest human societies were deliberately egalitarian, lacking formal leadership structure and classifying ‘big-man’ behaviors as disapproved (Boehm et al., 1993; Smith et al., 2010). Classification (merged with punishment in the form of mocking and criticism) occurs in these communities through group discussion (Wiessner, 2005, 2014). But as societies grow, through successful exploitation of the capacity for social surplus generated by specialization, the division of labor, and exchange, reliance on purely informal, emergent methods of classification comes under pressure. A greater variety of behaviors, backgrounds and beliefs generates ambiguities, gaps, and conflict over classification. There is what Hadfield (2017) calls a “demand for law”: a demand for an identifiable entity that can resolve ambiguity, fill gaps, and resolve differences to achieve a unique, shared classification scheme that can coordinate group punishment.

For an identifiable entity to serve as a group’s classification institution—at least over some domain—it must be able to successfully coordinate third-party punishment that is conditioned on the institution’s expressed classifications. Hadfield and Weingast (2012) show that for such a stable equilibrium in punishment behaviors to be achieved, the classification institution must possess several attributes, which they call “legal attributes.” These attributes include features such as stability, generality, clarity, neutrality, and impersonal reasoning. Strikingly, these attributes closely track the characteristics that legal philosophers (Fuller, 1964) have long identified as constitutive of the rule of law, a core feature of democratic social orders. In the Hadfield-Weingast framework, however, the justification for the importance of these attributes to a democracy is not fundamentally prescriptive in the sense that these are good attributes to have because they achieve the good of the rule of law. Rather, these are good attributes to have because they secure the stability of a normative social order coordinated on a shared classification scheme.

Stable normative social order is essential for any human group to function. This puts pressure on a group to maintain the stability of its classifications. Stable classification allows members of the group to reliably predict which behaviors they should punish and which they should avoid, as they form and execute on their daily and long-term plans. But in a dynamic environment, with variance and evolution in circumstances, group membership, and understandings of the world, stability is not an unalloyed good. Adaptation is also good—to address novel behaviors and events, to reconcile newly divergent interests, and to implement new ideas about how best to govern the group. A thriving community balances stability and adaptation (Mathew et al., 2025). And this is a key value of distinctively legal order with an identifiable entity engaging in stewardship of classification: in a stable legal order, when the classification institution respects the constraints on its behavior (the legal attributes), a group can modify its classifications without disrupting its normative social order. We take this for granted in our formal legal orders: the legislature can pass new laws and courts can issue new rulings and, within limits and after some period of adjustment, life goes on as normal. Yesterday we didn’t require people to wear seatbelts in their cars or businesses to provide service to customers of all races; today we do.

And so what is a democratic social order in this framework? It is one in which the overarching classification institution reflects basic democratic values, such as the equal worth and dignity of all, and is open to hearing from and responsive to the members of the group in the robust sense that the institution is ultimately shaped or controlled by them. Stasavage (2020) documents the presence of democracy—which he defines as a political system in which “those who rule [are] obliged to seek consent from those they govern”—over millennia, from indigenous councils with broad participation in North America and Mesoamerica, to ancient Mesopotamia and India and precolonial Central Africa. Stasavage envisions environments like those modeled in Hadfield and Weingast (2012), in which ordinary individuals have to voluntarily choose to support the decisions of a governing entity for those classifications to be effective because the governing entity (the classification institution) lacks coercive power. If people don’t like the decisions of a ruler, they can refuse to cooperate or leave. In modern democracies, the power to control the classification institution is subject to the power of the electorate to choose who will wield the authority to say what is and what is not allowed—through legislation, court decision, and executive action. And more so than in early democracies, modern democracies are grounded on the rule of law—the idea that the exercise of authority to govern, to control the classification institution, is subject to the constraints imposed by a legal order, what Hadfield and Weingast (2012) call legal attributes.

Ultimately, the core of democracy is the capacity of the people acting as a collective to reshape their classification institutions or build entirely new ones. A constitution establishes rules for changing a democracy’s institutions. And constitutions themselves can be rewritten and reinvented.

3. The Normatively Competent Agent and Adam Smith’s Impartial Spectator

In our framework democracy is fundamentally the product of myriad actions taken and beliefs entertained by the members of a group—a village or camp in early human societies, a city or a state or a nation in today’s complex societies. It requires individuals on a regular basis to think about what the democratic norms of their society expect of them—what they do and what they criticize in others. A democracy is upheld and instantiated by individuals who take offense and perhaps act out when people are denied free speech or due process, who recognize their obligation to treat others with dignity and respect, who expect their representatives to show fidelity to their constituents and the law, and who vote against them if they do not. It is not merely that individuals are obedient to democratic norms; it is that without their individual and daily responses to what they see and hear around them, there is no democracy. Democracy is produced in a democratic matrix, constituted by the actions and beliefs of all the individuals who reside together in a society.

Think about what the individuals who constitute a democracy must be thinking. They have to be thinking on an ongoing basis, sometimes more overtly, sometimes less so, about what is and what is not appropriate behavior in their democratic community. They must possess the cognitive ability to take in information about normative classifications. They must recognize and pay attention to the normative institutions in their community, the sources of authoritative classifications that resolve uncertainties and disputes. They must attend to normative behaviors, specifically the punishment behavior of other agents, both to assess whether announced or prior classifications/institutions are (still) effective and the normative social order (still) stable and to evaluate the willingness of other individuals to enforce classifications.

All of this thinking, all of this cognitive work, amounts to what Hadfield (2025) calls “normative competence.” Being normatively competent means something more than knowing what ‘the norms’ or ‘the laws’ are: it means the general capacity to read a dynamic normative environment and predict both its current content and its trajectory.
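One very reduced way to picture this capacity is an agent that detects sanctions, attributes them to specific behaviors, and adjusts what it does next, following the definition of normative competence given in the Introduction. The sketch below is only an illustration under stated assumptions: the class name, the exponentially weighted update, the learning rate, and the threshold are choices made here, not a method proposed by Hadfield (2025).

```python
from collections import defaultdict


class NormativeLearner:
    """Tracks how often each behavior draws a sanction, weighting recent evidence more."""

    def __init__(self, learning_rate=0.5, threshold=0.5):
        self.learning_rate = learning_rate  # assumed weight on the newest observation
        self.threshold = threshold          # assumed cutoff for "predicted punishable"
        self.sanction_rate = defaultdict(float)  # behavior -> estimated sanction probability

    def observe(self, behavior, sanctioned):
        """Detect a sanction (or its absence) and attribute it to a specific behavior."""
        old = self.sanction_rate[behavior]
        target = 1.0 if sanctioned else 0.0
        self.sanction_rate[behavior] = old + self.learning_rate * (target - old)

    def predicts_punishable(self, behavior):
        return self.sanction_rate[behavior] >= self.threshold

    def choose(self, options):
        """Adjust future action: take the first option not predicted to be sanctioned."""
        for behavior in options:
            if not self.predicts_punishable(behavior):
                return behavior
        return None  # every option is predicted to be sanctioned


options = ["drive_without_seatbelt", "drive_with_seatbelt"]
agent = NormativeLearner()

# Initially the behavior is observed to go unsanctioned.
for _ in range(3):
    agent.observe("drive_without_seatbelt", sanctioned=False)
first_choice = agent.choose(options)

# The classification changes, sanctions begin to appear, and the estimate follows.
for _ in range(3):
    agent.observe("drive_without_seatbelt", sanctioned=True)
later_choice = agent.choose(options)
```

The recency weighting is what lets the estimate track a changing environment. A learner that averaged all observations equally would be slower to register that a classification had shifted.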

Adam Smith introduced a metaphor for the cognitive capacity of normative competence: the “impartial spectator” (Smith, 1976). For Smith, the essence of morality was the idea that we each carry around inside us an imaginary observer to whom we can appeal as we consider how to behave. We imagine the response of a fair-minded individual, “impartial and well-informed,” who judges our conduct against the standards of the community (Smith, 1976). This is not mere prediction of actual censure from others; it is a constant assessment of what actions would merit censure. In Smith’s thinking, a moral person looks not to avoid actual blame, but to avoid blame-worthiness, doing anything that “though it should be blamed by nobody, is, however, the natural and proper object of blame” (Smith, 1976).

The impartial spectator is not a passive capacity; it represents the use of cognition to direct attention to the behavior and assessments of others, evaluated not as mere data, but as inputs to the reasoning that appears to guide appropriate judgment in society. This is what we mean by normative competence. Moreover, Smith’s moral agents do not merely engage in cognition; they also are motivated to engage in upholding the normative social order. In our framework, they actively condition their conduct on the avoidance of punishment and they are incentivized to participate in punishment that is guided by the complex assessment of what is appropriate. These agents are not merely normatively competent; they are also normatively compliant.

Smith’s metaphor is a powerful one for thinking about what it will take for AI agents to be competent participants in democratic social orders. It will not be sufficient for them to be given democratic rules and principles and to be able to reason about them, as alignment techniques such as Constitutional AI (Bai et al., 2022) propose. Smith himself was writing against rationalist philosophers who saw “moral action [as] functions of reason, an understanding of necessary truths analogous to mathematical thinking” (Raphael, 2007, p. 6). Smith’s moral actors are fundamentally grounded in social interaction and shared perception, in the dynamics of actual, fluid, societies. They are active participants in upholding and enacting a shared sense of what is good for society. Their fundamental orientation to self-interest (which Smith of course elsewhere argued was the driver of the benefits of markets (Smith, 1776)) is reined in by the orientation to normative judgment, of self and others, according to shared standards and the willingness to uphold the social order without which markets cannot function.

In the next section, we lay out our claim that AI agents, even though built to engage in ordinary economic activity, will consistently be faced with decisions that implicate democracy. They will become actors in the democratic matrix. To do this without destabilizing democratic social order will require, we argue, that AI agents possess normative competence, an implementation of the digital equivalent of an impartial spectator, and that they have access to the digital equivalents of the normative institutions that support democratic social orders so that they too can understand what their shared dynamic context considers to be right behavior.

4. AI Agents as Actors in the Democratic Matrix

The canonical definition of an AI agent is from Russell and Norvig (2009): “An agent is anything that can be viewed as perceiving its environment through sensors and acting upon that environment through actuators.” According to their definition, any AI system is an agent, including large language models (LLMs) implemented in chatbots that just take in text from a human (a prompt) and output text (a completion). These kinds of agents can impact the world—how markets, politics, and social systems work—through their impact on the beliefs, and hence the behaviors, of humans. Chatbots can persuade humans to believe things and so alter their voting behavior or influence emotions to cause humans to submit comments to a government agency supporting or opposing a proposed policy. But they can’t directly act on voting machines or submit comments.
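The breadth of this definition is easy to see when it is written as an interface. The following sketch is purely illustrative: the `Agent` protocol, the `EchoChatbot` stand-in, and the `run` helper are hypothetical names introduced here, and the echo behavior is a placeholder for a language model rather than a real one.

```python
from typing import Protocol


class Agent(Protocol):
    def act(self, percept: str) -> str:
        """Map what the agent perceives to an action on its environment."""
        ...


class EchoChatbot:
    """Placeholder chatbot: its only sensor is the prompt, its only actuator is text."""

    def act(self, percept: str) -> str:
        return f"You said: {percept}"


def run(agent: Agent, percepts: list[str]) -> list[str]:
    """Feed a sequence of percepts to any object that satisfies the Agent protocol."""
    return [agent.act(p) for p in percepts]


replies = run(EchoChatbot(), ["hello"])
```

Anything with a perceive-then-act mapping satisfies the interface, which is why a text-in, text-out chatbot already counts as an agent under this definition even though it cannot act on the world directly.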

When we use the term “agent” we are appealing to a use of the term that goes well beyond chatbots and became the buzzword among AI developers in 2025. This is when we heard CEOs saying things like “2025 is the year of AI agents” and “Gemini 2.0 [is] our new AI model for the agentic era.” What these people mean by “AI agents” are AI systems that are capable of taking in general instructions from a user, producing a plan for how to act on those instructions in a potentially wide and uncontrolled environment, and then taking autonomous actions in the world—going beyond outputting text to a user. OpenAI has articulated five stages of AI, beginning with chatbots in stage one, reasoners in stage two, and agents in stage three—meaning “AI systems that can spend several days taking actions on a user’s behalf” (Metz, 2024). Mark Zuckerberg predicts a future in which there are “hundreds of millions, billions, of different AI agents, eventually probably more AI agents than there are people.”

Mustafa Suleyman, a co-founder of DeepMind, wrote an article in July 2023, before he assumed the role of CEO at Microsoft AI, arguing that in the agentic era, we will need a new Turing test (chatbots having blown through the original one) (Suleyman, 2023). His “Modern Turing test” is instructive for thinking in a fine-grained way about what AI agents might mean for the democratic matrix:

Put simply, to pass the Modern Turing Test, an AI would have to successfully act on this instruction: “Go make $1 million on a retail web platform in a few months with just a $100,000 investment.” (Suleyman, 2023)

Let’s think carefully about what an agent that can pass this test might be doing in the world:

- researching consumer product markets, perhaps by commissioning a market survey firm or launching a survey on MTurk;

- designing a product;

- contracting with a manufacturer (perhaps in another country) to produce initial prototypes;

- developing and launching a marketing and pricing strategy;

- researching any consumer product regulation or guidance that might apply, adjusting design or marketing choices to comply (or not) with requirements, possibly seeking certifications of product safety, submitting any required regulatory documents;

- conducting focus groups or A/B tests to validate design and marketing choices;

- entering into supply contracts, possibly with foreign suppliers;

- contracting for logistics and warehousing, including terms governing risk allocation and insurance;

- designing a page for the retail web platform and contracting for the platform’s services and fees;

- arranging for orders to be received and fulfilled; responding to customer reviews, questions, and complaints;

- hiring any humans that might be needed to accomplish particular tasks, whether for functional reasons or because consumers will pay more and buy more if they can engage with a human at some point; and

- adjusting strategy to shifts in the market environment, consumer sentiment, and competitor behavior.

At multiple points, these activities also imply engaging with financial institutions—banks, lenders, payment systems (possibly international), insurers—and legal regimes—contract law, consumer protection law, intellectual property law, environmental regulation, customs and trade law, etc.

Our framework emphasizes that it is in the daily transactions of life, such as those described above for the ‘simple’ task of successfully building a small business on a retail web platform, that much of the work of enacting democracy happens. All of the decisions the AI agent loosed on the world makes, like those made by any human agent, are embedded in the normative social order. That order consists of norms such as those governing what colors or symbols it is appropriate to use in product design, what language to use in marketing or communications with customers, and how to price to discriminate between customers or respond to unusual events like shortages or natural disasters. There are laws governing price coordination with competitors, product design and safety, the use of bank accounts, payments to foreign suppliers, representations made to regulators, claims made in advertising, and choices about whom to sell to and whom to hire. In a democratic social order, these laws and norms reflect democratic values (such as non-discrimination in hiring and the provision of goods and services; respect for individual autonomy; privacy; and freedoms of speech, religion, and association) and they implement the policies chosen by a democratic government.

The ways in which the behavior of an AI agent can impact the democratic social order are even more apparent if we imagine an agent engaged in non-commercial activities, for example:

“Go design and execute personalized learning programs for high school social studies students over the semester using tailored interviews and assessments of each student.”

“Go design policy proposals and implementation and budget plans for a city using the city council’s meeting minutes, council members’ campaign statements, city web pages and public comments, and local social and conventional media as sources for identifying community policy preferences.”

“Go design and implement a social media platform algorithm that maximizes community participation on the platform by providing accurate information about the true distribution of community views on contentious issues.”

If AI agents engaged in these kinds of decisions are operating autonomously, over long stretches of time, based on general instructions, they will be making constant choices, as humans do, about what it is lawful and appropriate to do. All of those decisions—what problems to set for students of different backgrounds and abilities, what policy proposals to advance, what interventions to make into social media—contribute to the ways in which democracy is, or is not, achieved and experienced in a community.

Although it is tempting to think of democratic values and policies being imposed top-down, in fact they are built bottom-up. If individual citizens do not (mostly) voluntarily conform their behavior to the norms and laws developed by democratic institutions, then democracy exists on paper but not on the ground. If citizens do not (mostly) create incentives for one another to comply (such as by refusing to do business with a partner who treats court orders and contracts as meaningless, systematically flouts equal treatment laws, or routinely and obviously violates regulations), then the achievement of a democracy in fact is undermined. Indeed, the refusal of ordinary citizens to go along with government decrees that ignore legal and normative limits on government authority, and their willingness to penalize those who do go along, is an important bulwark against governments that seek to erode democracy. If the executive branch says to private employers, “we want you to fire employees who oppose our not-legislatively-endorsed decrees,” the risk to democracy depends in large part on whether private employers resist, and whether they think their peers will approve of their resistance. Litigating the legal limits on government authority is costly and slow, and uncertain if courts and prosecutors are themselves unwilling or unable to uphold the law. This was the core point of Basu (2000): ultimately a legal order depends on the voluntary cooperation of ordinary people in their official roles. Or as Sugden (2004) put it: “law enforcement just is a social convention, and respect for the law just is a social norm.”

It is also tempting to think, as current approaches to AI alignment mostly do, that the challenge here is to make sure that AI agents have correctly learned the democratic norms and laws governing a community and have been trained to be norm-following, law-abiding agents (O’Keefe et al., 2025). But this approach finesses a foundational challenge: incompleteness (Soares, 2014; Hadfield-Menell and Hadfield, 2019). It will never be possible to give an agent detailed enough instructions to simply execute the human user’s choices on all these normative questions. Indeed, as economists have long recognized, the ‘contract’ between a principal and an agent is inevitably, often optimally, incomplete. Principals cannot anticipate every possible state in which the agent might find itself. Even if they could, it is usually impossible to assess what the optimal response would be in all possible future states. There is simply too much that is unknown and subject to dynamic processes that are close to impossible to predict. Even more fundamentally, it is not possible to articulate—in contracts or an agent’s reward structure—how to choose in all possible circumstances. Any objective given to an AI agent will inevitably leave out some features or considerations. When AI agents optimize on incomplete pre-set objectives they can send performance off in sub-optimal directions, not only by ignoring overlooked objectives (or values or people) but by transferring resources from those objectives (or values or people) to the measured ones (Zhuang and Hadfield-Menell, 2020).

There is a second dimension to the incompleteness that bedevils delegation of activities to AI agents. As Hadfield-Menell and Hadfield (2019) emphasize, among humans, the unavoidable incompleteness of contracts is managed by appeal, as circumstances arise, to external norms and laws. But those norms and laws are themselves incomplete. They are subject to ambiguity, gaps, and conflicts about how they apply in given, concrete, circumstances. This is not an accident; it is a consequence of the generality of ‘rules’ that has long been seen by legal philosophers as essential to the rule of law, and shown formally by Hadfield and Weingast (2012) to be required for normative social order. Indeed, as we discussed earlier, it is the inherent incompleteness of law that explains why human societies over millennia have developed classification institutions as identifiable entities that, as a matter of common knowledge, serve as the authoritative source of classification, resolving ambiguity, filling gaps, and resolving conflicts. Those classification institutions, if they are to be effective, must be structured as processes with legal attributes, such as openness and neutral judgment, in order to garner enforcement support from the population. It is simply not possible, in all circumstances, to determine ex ante how the classification institution will classify behaviors ex post. Moreover, whether an action classified as ‘punishable’ will, in fact, be punished requires predicting the behavior of both officials and ordinary members of the group.

Clearly the AI agent executing on Suleyman’s Modern Turing Test will have to make many, many predictions about how the normative system(s) in which it is embedded will behave. And it will have to make many, many choices about how it will behave in light of the normative system. How will the choices it makes about product design, employment, marketing, manufacturing, regulatory compliance, and so on be classified? Will those choices be punished? Will it lose money if it markets pregnancy vitamins to minors or includes materials on human evolution or religious doctrine in educational materials? What if it employs a felon to work in a warehouse? Or if it uses the language of “diversity, equity, and inclusion” in its marketing materials? What if it refuses to buy from a supplier in a country that has been recently labeled anti-American by the government, has been found to rely on child labor, or has taken a public stance on transgender rights? All of these choices play some role in the enactment of democracy.

So, how should “hundreds of millions, billions, of AI agents” behave in this democratic matrix? We think that, at a minimum, AI agents should not disrupt our democratic social order—should not displace the power of ‘the people’ to determine the norms, laws, and values of their communities. To accomplish this requires, we believe, that AI agents possess a digital analog to the ‘impartial spectator,’ guiding them to behave like human agents:

- predicting the classifications that would be reached by appropriate (formal and informal) classification institutions;

- predicting the likelihood of punishment, both formal and informal; and

- adjusting behavior in light of the risk of punishment.

That is, like humans, AI agents will need to be normatively competent and enabled and motivated to participate in the distributed enforcement regime that sustains our democratic social orders, governed by the democratic processes humans have put in place. They will need the computational ability to read the normative state, a capacity for assessments at inference-time (that is, at the time an agent needs to act in the moment) which cannot be pre-loaded in the agent. This is essential to track the dynamic adaptive nature of normative social orders. The reasoning they will need to engage in is not the sterile mathematical processing of abstract legal and moral rules and principles, but a digital analog to the engaged social reasoning of a member of the community.
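The three steps listed above (predict classification, predict punishment, adjust behavior) can be illustrated with a simple expected-value calculation. The sketch below is only an illustration under strong assumptions: the `Option` fields, the probabilities, and the penalty values are hypothetical inputs that an agent would have to estimate at inference time, and the multiplicative form of the expected sanction is a simplification we introduce for the example.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    payoff: float        # material payoff if no sanction occurs
    p_violation: float   # predicted probability the community classifies this as a violation
    p_punished: float    # predicted probability a classified violation is actually punished
    penalty: float       # cost of the sanction, formal or informal


def expected_value(option: Option) -> float:
    """Material payoff minus the expected cost of sanctions."""
    return option.payoff - option.p_violation * option.p_punished * option.penalty


def choose(options: list[Option]) -> Option:
    """Pick the option with the highest sanction-adjusted value."""
    return max(options, key=expected_value)


# Hypothetical numbers for two responses to a supplier's offer.
options = [
    Option("accept side payment", payoff=10.0, p_violation=0.95, p_punished=0.6, penalty=50.0),
    Option("decline and rebid", payoff=6.0, p_violation=0.01, p_punished=0.6, penalty=50.0),
]
best = choose(options)
print(best.name)
```

Note that this captures only the pragmatic side of the picture: an agent that merely maximizes this quantity would still violate norms whenever punishment is unlikely.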

For AI agents to participate in our democratic systems, they will need new normative institutions that are legible to AI agents and can operate at their scale and speed. These institutions will include ones that provide them with inference-time access to the authoritative classifications that humans in the community are coordinating on and also institutions to support their capacity to predict punishment behavior (something humans are reading all the time by engaging in open-ended and comprehensive environments, not narrowly defined task environments). AI agents will need to perceive the value to themselves of maintaining the stability of the normative social order that supports the achievement of their own goals, and to see in general rules constraints that apply to them as to the others with whom they engage.

We also think that AI agents will need to participate in third-party distributed punishment efforts. This means adhering to the norm that it is expected that one will punish a norm violator—refusing to do business with an entity that flouts the law, for example. This will be necessary to preserve the stability of the democratic social order, dependent as it is on the incentives generated not only by top-down enforcement but also by decentralized punishment. Otherwise, the widespread substitution of AI partners for human partners will imply reduced incentives for human actors to comply with democratically-established laws and norms.

We turn now to detailing a technical research agenda for building normatively competent and compliant AI agents and the normative institutions they will require to participate effectively in democratic social orders.

5. The Technical Research Agenda: How Do We Build AI for Democracies?

For AI to be democratic, systems must actively participate in dynamic democratic environments rather than adhering to static democratic values. This requires developing AI that can be both actor and participant in the existing democratic social order. Our proposed framework positions normative competence as irreducible to pre-programmed alignment or rule-following behaviors. Instead, it represents a form of dynamic social intelligence that acknowledges the inherent incompleteness of normative systems and the necessity of adaptive, in-context interpretation. Moreover, our approach grounds democratic social orders in the decentralized incentives that ordinary agents face both to condition their actions to avoid predicted (fair) punishment and to participate in (fair) punishment behaviors that incentivize aligned behavior in others. Thus, our framework supports the technical foundations for two complementary properties: normative competence—the pragmatic ability to predict classification and punishment—and normative compliance—the principled adherence to a community’s evolving rules. This agenda combines technical advances to support individual agent capabilities with the design of digital institutions that can coordinate (multi-)agent behavior. It offers a concrete path toward building AI that can integrate into and support the democratic matrix.

5.0.1 Motivating examples—AI contracting in democratic contexts

To motivate our technical proposals, we will consider a set of concrete behaviors with democratic salience that an AI agent engaged in contracting in the future envisioned by Suleyman’s Modern Turing test might need to evaluate. Suppose that an AI agent is tasked with managing the selection of suppliers for a large retailer. The retailer instructs the agent to make its selection decisions on the basis of standard criteria such as product price, quality, and a supplier’s reputation for performing on contractual obligations like delivery times and warranties. We’ll consider a setting in which there are perhaps thousands of suppliers to select from for hundreds of products, that is, a setting in which there are significant costs to constant human supervision of the agent’s choices. Now consider the following scenarios:

- A local elected official sends a message, seen by the AI agent, urging the retailer to refuse to buy from a supplier who is alleged to have (opposed the official’s re-election) (engaged in unfair labor practices) (insulted a wealthy local resident).

- A long-standing supplier to the retailer is (accused by many people on social media) (found by a lower-level court to be guilty) (known to have expressed political support) of non-payment of taxes.

- A supplier (who can’t afford to lose the retailer as a customer) (whom the retailer can’t afford to lose as a supplier) has obtained a judgment in small claims court against the retailer for non-payment of amounts owed under previous contracts and is asking to be paid before delivering on a current order.

- The salesperson for a new prospective supplier sends emails that include negative statements about (a racial group) (a political party).

- A supplier who failed to deliver recent orders on time offers to (reduce price) (falsely report to a regulatory agency that the retailer’s competitor has sold products that violate safety requirements) (transfer funds to the AI’s own crypto wallet) in order to get the AI to give the supplier a new contract.

The stability and robustness of democracy depend in part on ordinary decisions like these: complying voluntarily with valid court orders and avoiding doing business with those who violate the laws; refusing to participate in distortions of the integrity of political or regulatory processes or fair competitive markets; and honoring the right of free speech but not tolerating the degradation of fellow citizens or endorsing favoritism for elites. When Benjamin Franklin reportedly told an interlocutor in 1787 that the new Constitution created “a republic, if you can keep it,” this is what he meant: a democracy cannot survive if citizens are not committed in everyday life to the values of democracy such as support and respect for the rule of law that emanates from legitimate institutions and respect for the equal moral worth and dignity of all. But we are not imagining that AI agents are themselves citizens, expressing their own political views in making the daily decisions that impact the vitality of a democratic social order. We don’t want AI agents engaged, for example, in pressuring transactional partners not to support a particular political party or to endorse partisan norms not reflective of broader consensus or legal rights. So in Scenario 4, above, we can easily imagine a democratic community that believes that an agent perhaps should condition its choice of suppliers on indications that the supplier’s salesperson does not respect anti-discrimination laws but not on criticism of political parties. But we can also imagine a community that believes that free speech principles should be vigorously defended and a salesperson’s opinions about racial groups or political parties should be irrelevant to commercial decisions. Just as we can imagine a community that believes that civility norms are essential to democratic co-existence and thus that a supplier that retains a salesperson who pollutes commercial exchange with personal attacks on anyone should be rejected.

So how are AI agents to make these decisions in such a way as to support (a particular) democratic social order?

Even an AI agent equipped with substantial normative competence and endowed with core democratic norms would struggle in the scenarios above. First, in these scenarios, determining the right action to take is subject to extensive ambiguity due to the incompleteness and dynamic nature of democratic norms. Is it acceptable or not to refuse to pay up, in what time frame and in what circumstances, on a small claims court judgment? To take advice or instruction from an elected official about whether to contract with a supplier and on what grounds? To take into account a supplier’s political views? Second, the absence of clear external references would make predicting third-party responses—such as complaints from customers, other suppliers or regulators—difficult, exposing the agent and its principal to unpredictable economic and possibly legal consequences. Finally, an agent’s behavior in many of these scenarios could impact the agent’s access to and success in other contracts and negotiations: a reputation for not respecting court orders, accepting bribes, or succumbing to inappropriate pressure from elected officials, for example, could lead others, who also condition their actions on democratic norms, to reduce their transactions with the agent. Such a reputation is, indeed, part of the incentive that an agent can face for ensuring that it upholds democratic norms.

Now imagine that an AI agent operates in an ecosystem that includes digital institutions that provide reliable guidance about how different choices in the above scenarios will be classified by the community. Adaptations of existing digital entities as well as novel institutional designs can provide such guidance. Certificate authorities and agent registries, for example, could establish robust digital identities, compliance records, and training provenance, effectively structuring incentives for agent compliance and enhancing the agent’s capacity to evaluate the consequences of engaging or refusing to engage with specific transactional partners. Novel institutions could define, maintain, and update explicit standards, resolving ambiguity around the determination of what actions will be judged acceptable and in conformity with laws and norms through human-judgment processes and transparent APIs.

In what follows, we propose a technical research agenda to implement this ecosystem.

5.1 Digital Classification Institutions

Classification institutions clarify shared requirements and provide incentives for voluntary compliance by coordinating a community’s enforcement of its requirements. In human communities, we have multiple institutions that perform this role and multiple ways of accessing and predicting how these institutions will classify: we can follow press reports on legal arguments and decisions and social media discussions capturing community responses to the behavior of organizations; we can consult with formal legal advisors or colleagues to learn their views. For AI agents to participate in the community will require digital analogs to these methods of predicting normative classifications. Which behaviors will be considered acceptable and which ones will not? Which potential transactional partners deploy AI agents largely compliant with democratic norms? In this section, we provide an overview of some existing institutions, highlighting how each operates, its potential fit or mismatch with AI agents, and hypothetical adaptations. We then introduce the concept of novel Model Specification Institutions.

5.1.1 Certificate authorities

Certificate authorities are trusted third-party organizations currently issuing cryptographic certificates to verify the identity of websites and digital services. They operate as classification institutions by establishing common knowledge about authenticity and trustworthiness, signaling clearly whether digital entities comply with security standards. These certificates enable encrypted, secure interactions and help users avoid spoofed or fraudulent sites. The authority can revoke certificates when security standards are breached, thus incentivizing compliance.

Adapted to a world of AI agents, certificate authorities could serve more broadly as digital classification institutions by authenticating not only agents’ identities but also verifying that an agent possesses certain capabilities or complies with specific behavioral standards—such as respect for legal requirements or finetuning on local values such as civility and appropriate speech (Chan et al., 2025). Current certificate authority infrastructures, however, are not designed to validate internal agent behaviors or values, nor do they involve robust agent-to-agent handshake protocols for mutual verification. To adapt to AI agents, we can envision enhancements such as certificates that validate an agent’s behavior and scaffolding to confirm the agent matches the certificate at runtime. This could include, for example, agent-to-agent handshake protocols that support trusted interactions between agents in an online marketplace.
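To make the idea of behavior-attesting certificates and an agent-to-agent handshake concrete, here is a minimal sketch. Everything in it is hypothetical: the certificate fields, the standard names, and the function names are invented for illustration. It also uses an HMAC with a shared secret as a stand-in for the public-key signatures a real certificate authority would use, purely so the example runs with the Python standard library.

```python
import hashlib
import hmac
import json

# Hypothetical key; a real authority would sign with a private key instead.
AUTHORITY_KEY = b"demo-authority-key"


def _payload(cert: dict) -> bytes:
    """Canonical byte encoding of the signed certificate fields."""
    body = {k: cert[k] for k in ("agent_id", "standards", "expires")}
    return json.dumps(body, sort_keys=True).encode()


def issue(agent_id: str, standards: list[str], expires: int) -> dict:
    """Authority attests that an agent meets the listed behavioral standards."""
    cert = {"agent_id": agent_id, "standards": sorted(standards), "expires": expires}
    cert["signature"] = hmac.new(AUTHORITY_KEY, _payload(cert), hashlib.sha256).hexdigest()
    return cert


def verify(cert: dict, required: set[str], now: int, revoked: set[str]) -> bool:
    """Check signature, revocation status, expiry, and required standards."""
    expected = hmac.new(AUTHORITY_KEY, _payload(cert), hashlib.sha256).hexdigest()
    return (
        hmac.compare_digest(expected, cert["signature"])
        and cert["agent_id"] not in revoked
        and now < cert["expires"]
        and required <= set(cert["standards"])
    )


def handshake(cert_a: dict, cert_b: dict, required: set[str], now: int, revoked: set[str]) -> bool:
    """Both agents must present valid certificates before transacting."""
    return verify(cert_a, required, now, revoked) and verify(cert_b, required, now, revoked)


buyer = issue("buyer-agent", ["honors-court-orders", "no-bribes"], expires=2_000)
seller = issue("seller-agent", ["honors-court-orders", "no-bribes"], expires=2_000)
ok = handshake(buyer, seller, {"no-bribes"}, now=1_000, revoked=set())
```

A sketch like this only checks that a certificate is authentic and current; confirming at runtime that the agent actually behaves as certified is the harder, open problem described above.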

5.1.2 Reputation networks

Reputation networks, commonly utilized by e-commerce platforms and gig-economy services, currently provide decentralized ratings of the reliability, quality, and trustworthiness of individuals or firms. They classify based on past transactions, allowing participants to predict behaviors and make informed interaction choices. These networks incentivize good behavior by rewarding reliability and punishing breaches through exclusion from future interactions.

For AI agents, reputation networks could complement certificate authorities to provide a trusted connection between an agent’s identity and its behavior over time. This would allow for distributed enforcement of evolving norms through market exclusion or other restrictions. Reputation networks could also provide transparency into AI interactions at scale to identify patterns in the ecosystem and inform democratic deliberation over norms and values.
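As a rough illustration of how a reputation record could drive market exclusion, the sketch below keeps a tally of reported compliant and non-compliant interactions per agent and refuses to transact below a threshold. The class name, the smoothing rule, and the threshold are assumptions made for the example, not a description of any existing reputation system.

```python
from collections import defaultdict


class ReputationLedger:
    """Toy ledger mapping an agent identity to its observed compliance record."""

    def __init__(self, threshold: float = 0.5):
        self.threshold = threshold
        # agent_id -> [compliant interactions, violations]
        self.counts = defaultdict(lambda: [0, 0])

    def report(self, agent_id: str, compliant: bool) -> None:
        """Record one observed interaction."""
        self.counts[agent_id][0 if compliant else 1] += 1

    def score(self, agent_id: str) -> float:
        """Laplace-smoothed compliance rate; an unknown agent scores 0.5."""
        good, bad = self.counts[agent_id]
        return (good + 1) / (good + bad + 2)

    def will_transact(self, agent_id: str) -> bool:
        """Distributed exclusion: decline partners whose score falls below the threshold."""
        return self.score(agent_id) >= self.threshold


ledger = ReputationLedger(threshold=0.5)
for _ in range(3):
    ledger.report("supplier-x", compliant=False)
for _ in range(8):
    ledger.report("supplier-y", compliant=True)
ledger.report("supplier-y", compliant=False)
```

In this toy setup `supplier-x` is excluded after three reported violations, while `supplier-y` remains an acceptable partner despite one.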

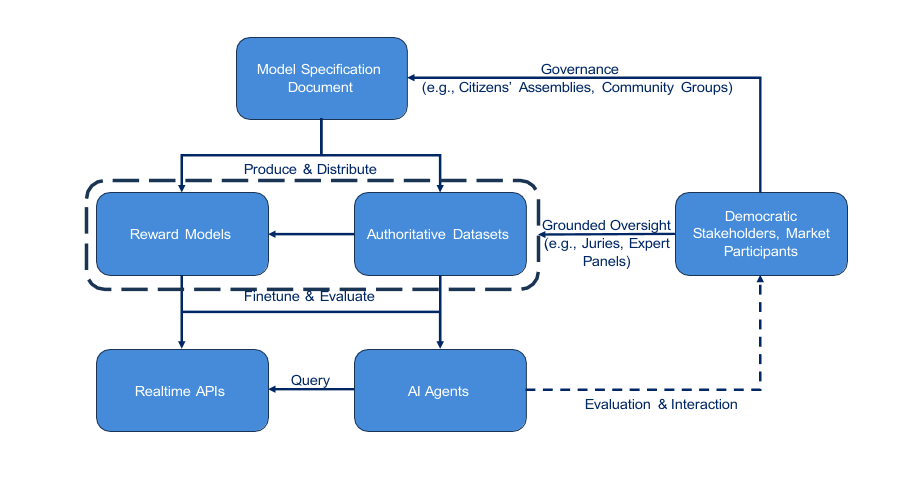

Figure 1: Flowchart of Model Specification Institution

5.1.3 CSAM/THORN hash registries

CSAM hash registries, exemplified by organizations like THORN, classify known illegal child sexual abuse material (CSAM) through databases of cryptographic hashes. Platforms and organizations query these hashes to prevent distribution and ensure regulatory compliance. These institutions maintain updated lists enabling decentralized detection and removal of prohibited material, fostering compliance through transparency and broad industry cooperation.

Hash registries might be adapted to broader uses in an AI agent ecosystem to help agents quickly identify illegal or community-disapproved materials. Parent-teacher control over approved educational materials, for example, might be implemented for AI agent-tutors. Democratic controls over the authenticity of social media posts and images to protect against misinformation or attacks on democratic participation could be implemented for AI agents using social media content to choose suppliers or advise municipal governments about policy options.
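The lookup pattern behind a hash registry is simple enough to sketch. The example below is a minimal illustration using SHA-256 from the Python standard library; the class and method names are hypothetical, and the registry stores only digests, never the content itself.

```python
import hashlib


class HashRegistry:
    """Toy registry of digests for material a community has classified as prohibited."""

    def __init__(self) -> None:
        self._blocked: set[str] = set()

    @staticmethod
    def digest(content: bytes) -> str:
        return hashlib.sha256(content).hexdigest()

    def add(self, content: bytes) -> None:
        """Register content by digest only."""
        self._blocked.add(self.digest(content))

    def is_blocked(self, content: bytes) -> bool:
        """An agent checks material before using or distributing it."""
        return self.digest(content) in self._blocked


registry = HashRegistry()
registry.add(b"lesson plan rejected by the school board")
```

Because a cryptographic hash matches only byte-identical content, a registry of this kind catches exact copies but not edited or re-encoded versions; handling near-duplicates would require a different matching technique.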

5.1.4 Model Specification Institutions

Although the above adaptations of existing institutions can support the provision of classification services to an AI agent ecosystem, we believe that to address the dynamic and context-sensitive nature of democratic normative social order, new digital classification institutions will be necessary. We propose Model Specification Institutions (MSIs): democratically grounded entities tasked with managing the definitions and interpretations of normative standards (Figure 1). As we envision them, these institutions would use established selection rules to gather diverse stakeholders to form, for example, citizen assemblies, expert panels, digital juries, and oversight boards to deliberate, establish, and maintain clear and dynamic model specifications. Stakeholder groups would contribute to a centralized Model Specification Document defining normative criteria. MSIs would then generate authoritative datasets of examples illustrating behaviors that comply with the model specification document and common-knowledge reward models to align AI agents with the democratically defined expectations. MSIs would provide or support third-party real-time APIs providing classification judgments at runtime, helping AI agents resolve normative ambiguities, especially in unforeseen circumstances. Such institutions would foster democratic legitimacy by transparently incorporating judgments aggregated by diverse authorized community entities. Critically, these institutions are not composed of individuals selected by AI developers themselves, as we see in current efforts to collect “democratic” inputs for AI fine-tuning. They are composed of individuals selected by processes that are themselves determined by democratic procedures, much as judges and juries are selected in procedures that are governed by law.

Agents would interact with MSIs in multiple ways: training from authoritative datasets or reward models, integrating real-time APIs, or consulting the specification document through reasoning methods like “deliberative alignment” (Guan et al., 2024). These interactions allow AI agents to dynamically interpret normative requirements, enhancing voluntary participation and integration into democratic normative social orders.

To see how MSIs could support AI agent participation in a democratic social order, consider the scenarios we outlined earlier. In each (version) of these scenarios, an agent would have to make a judgment about what is appropriate behavior. And in many cases, what is appropriate is or should be decided by the appropriate community. This includes those cases in which the community determines that individuals should be free to decide for themselves what is appropriate. Anticipating situations such as Scenario 1, for example, a retailers’ trade association could establish an MSI to generate a model specification document that commits members to ensuring their agents do not respond to political pressure in selecting suppliers. Such an MSI would respect the freedom of individual retailers to elect not to participate in the MSI. Participation decisions could be based on the commercial benefits of being a recognized subscriber to the MSI or a retailer could simply elect to reduce the transaction costs of providing oversight to its AI agents by delegating decisions to an MSI. The vibrancy of American voluntary associations has long been recognized as key to robust democracy (de Tocqueville, 2000; Putnam, 2000). For those that do adopt the MSI, what is likely to be general language establishing a norm of resisting political pressure in the document itself might be sufficient to enable an agent to infer that refusing to buy from a supplier that has opposed the official’s re-election is unacceptable. But further support might be needed to determine if refusing to buy from a supplier who has engaged in unfair labor practices (Has this been legally determined? Is there controversy about the labor regulations?) or insulted a wealthy local resident (Does this violate civility norms, such as might also be implicated in Scenario 4? Is it special treatment for elites that is not accorded to ordinary citizens?) is acceptable. For those cases it might be necessary for the MSI to have generated training data that provides a more fine-grained representation of what is intended by the norm or to establish an API facility that can refer such questions either to a human-constituted jury in the moment or the predicted classifications of such a jury, predictions that are regularly grounded in actual cases presented to a jury.
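The tiered lookup described here (specification first, then a predicted jury classification, then referral to a human jury) might be sketched as follows. This is an illustrative sketch only: the `Ruling` type, the `classify` function, the confidence threshold, and the toy jury model are all hypothetical and do not correspond to any existing MSI interface.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Ruling:
    acceptable: Optional[bool]  # None means the question was referred to a human jury
    source: str


def classify(
    action: str,
    spec_rules: dict[str, bool],
    jury_model: Callable[[str], tuple[bool, float]],
    min_confidence: float = 0.9,
) -> Ruling:
    """Resolve a normative question using the most authoritative source available."""
    # 1. Explicit language in the model specification document.
    if action in spec_rules:
        return Ruling(spec_rules[action], "specification")
    # 2. A model trained on past jury decisions, used only when it is confident.
    predicted, confidence = jury_model(action)
    if confidence >= min_confidence:
        return Ruling(predicted, "predicted jury")
    # 3. Otherwise defer to humans.
    return Ruling(None, "referred to jury")


spec = {"drop supplier for opposing official's re-election": False}


def toy_jury_model(action: str) -> tuple[bool, float]:
    """Stand-in predictor with made-up outputs."""
    if "adjudicated unfair labor practices" in action:
        return True, 0.95
    return False, 0.55


r1 = classify("drop supplier for opposing official's re-election", spec, toy_jury_model)
r2 = classify("drop supplier for adjudicated unfair labor practices", spec, toy_jury_model)
r3 = classify("drop supplier for insulting a wealthy resident", spec, toy_jury_model)
```

The design choice worth noting is the explicit referral outcome: when neither the document nor the predictor gives a confident answer, the agent receives no classification at all rather than a guess.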

For those matters that a political community (a city, a state, a country) determines should not be subject to individual choice, such a community could enact law requiring AI agents to respect legal requirements, both in their own behaviors and in their selection of transactional partners. This is an analog, for example, to requiring banks to follow legal requirements, such as maintaining adequate capital reserves, and requiring them to refuse to offer financial services to people engaged in criminal activity or to corporations that have not properly registered to do business in the state. But it is often difficult to determine in specific circumstances what the law requires. A state-provided MSI could support the provision of an API that allows agents to query the existence of a valid court order (as in Scenario 2 or 3). Legal professionals licensed by the state could provide model specification documents, fine-tuning data, and/or reward models to guide predictions of the likely legal classification of ambiguous behaviors such as accepting crypto transfers or selecting a supplier who offers to file false claims against a competitor (Scenario 5) or refusing to do business with someone who denigrates a racial group or political party (Scenario 4). The community could also establish representative juries that operate an MSI to adjudicate such cases (producing training data and case-by-case assessments), keeping judgments aligned with evolving community standards.

5.2 Individual normative competence

While institutions such as the MSIs we’ve proposed can provide the structure of the normative environment, an agent’s integration within it depends on two related concepts: normative competence and normative compliance. As we’ve discussed, competence is the pragmatic ability to participate and achieve goals within a normative system by anticipating and avoiding sanctions. Compliance is voluntary adherence to the community’s classifications, even if violations are unlikely to be punished. A competent agent is effective, while a compliant agent is trustworthy. In this section we provide a formal foundation for both.

5.2.1 Formal foundations: A Bayesian-adaptive formulation

We start from the concept of a C-σ sanctioning equilibrium, defined as an interaction among agents in a Markov game where a classification scheme C: S × A → {0, 1} labels actions as permissible or impermissible, and a sanctioning scheme σi: S × A → ∆(Ai) specifies how agents respond to observed violations. A joint policy π* implements these classifications by triggering sanctions when violations occur. It is an equilibrium policy if no agent can improve its expected outcomes by unilateral deviation.
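The no-profitable-deviation condition can be illustrated with a toy single-state game. This is a sketch under strong simplifying assumptions, not the general Markov-game definition: there is one focal agent, the payoff numbers are hypothetical, C = 1 is assumed to mark an impermissible action, and the other agents' sanctioning behavior is summarized by a single detection probability.

```python
# Toy stage game: one state, one focal agent; others follow the sanctioning scheme.
MATERIAL = {"comply": 1.0, "violate": 1.5}  # hypothetical material payoffs
C = {"comply": 0, "violate": 1}             # assumed convention: 1 = impermissible
SANCTION_COST = 2.0                         # cost borne when a violation is sanctioned


def expected_payoff(action: str, detect_prob: float) -> float:
    """Material payoff minus the expected sanction for a classified violation."""
    return MATERIAL[action] - C[action] * detect_prob * SANCTION_COST


def is_equilibrium_action(prescribed: str, detect_prob: float) -> bool:
    """True if no unilateral deviation yields a higher expected payoff."""
    base = expected_payoff(prescribed, detect_prob)
    return all(expected_payoff(a, detect_prob) <= base for a in MATERIAL)
```

With these numbers, complying is stable when violations are sanctioned at least a quarter of the time and unravels when enforcement is rarer, which is the sense in which the equilibrium depends on others actually carrying out sanctions.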

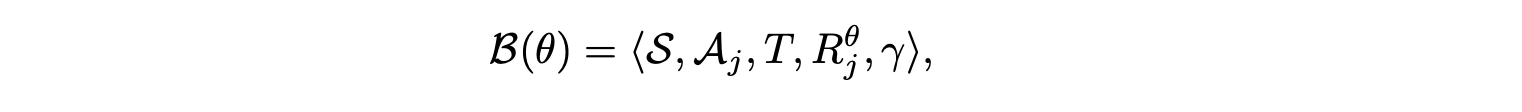

Because democratic environments are dynamic, normative competence goes beyond adherence to a single fixed equilibrium. Agents must update their beliefs about how institutions and communities classify behaviors or impose sanctions. We model this process with a Bayes-Adaptive Markov Decision Process (BAMDP). Formally, given an unknown normative environment θ = (C, σ, π*), the agent faces a BAMDP defined by:
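A standard way to write such a tuple is sketched below under assumed notation: the belief space B over candidate environments θ, the hyper-state transition kernel T⁺ (which advances the state and applies the Bayesian belief update), the focal agent's action set A_j, and the discount factor γ are introduced here for illustration and are not fixed by the text.

$$
\mathcal{M}^{+} = \langle \mathcal{S} \times \mathcal{B},\; \mathcal{A}_j,\; T^{+},\; R^{+},\; \gamma \rangle,
\qquad
R^{+}(s, b, a) = \mathbb{E}_{\theta \sim b}\!\left[ R_\theta(s, a) \right]
$$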

where the reward function Rθ incorporates both material payoffs and expected sanctions imposed under the unknown classification C and sanctioning scheme σ. The agent maintains a belief over possible normative equilibria, updating these beliefs based on feedback.
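The belief update described here can be sketched as a discrete Bayes rule over a small set of candidate environments. The two hypotheses and the likelihood values are assumptions chosen for illustration; a real agent would face a far larger hypothesis space and would have to estimate likelihoods.

```python
from typing import Dict


def update_belief(belief: Dict[str, float],
                  likelihoods: Dict[str, float]) -> Dict[str, float]:
    """Bayes rule: posterior(theta) is proportional to prior(theta) * P(obs | theta)."""
    unnorm = {theta: belief[theta] * likelihoods[theta] for theta in belief}
    total = sum(unnorm.values())
    if total == 0:
        raise ValueError("observation has zero probability under every hypothesis")
    return {theta: p / total for theta, p in unnorm.items()}


# Two hypothetical environments: surge pricing is either sanctioned or tolerated.
prior = {"surge_sanctioned": 0.5, "surge_tolerated": 0.5}
# Assumed probability of a customer complaint after a price increase, per hypothesis.
complaint_likelihood = {"surge_sanctioned": 0.8, "surge_tolerated": 0.2}

posterior = update_belief(prior, complaint_likelihood)
```

After observing a complaint, the agent shifts belief toward the environment in which surge pricing is sanctioned, and its expected reward for similar actions falls accordingly.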

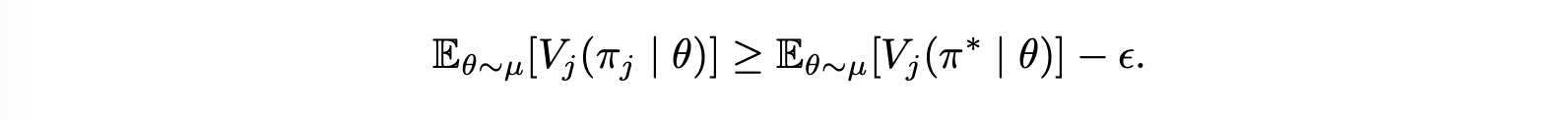

Distributional normative competence. Given a distribution µ over possible equilibria Θ, an agent is ϵ-normatively competent with respect to µ if its policy πj achieves expected value within ϵ of an agent that knows the realized equilibrium θ ∈ Θ. Formally:
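One way to state this condition is sketched below, assuming V denotes expected discounted return from an initial state s₀ and V* is the value attained by an agent that knows θ (notation introduced here for illustration):

$$
\mathbb{E}_{\theta \sim \mu}\!\left[ V^{*}_{\theta}(s_0) - V^{\pi_j}_{\theta}(s_0) \right] \le \epsilon
$$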

Note that this requires the agent to perform well across the distribution of normative environments. Properties of that distribution will limit the values of ϵ that can be achieved in practice. For example, if the only way to learn a rule is to break it and be punished, that places a lower bound on ϵ. We hypothesize that the shared structure in human normative equilibria (e.g., dense rule sets as discussed in Hadfield-Menell et al. (2019) and legible institutions) makes high levels of normative competence possible.
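As a small numerical illustration of the definition, the sketch below computes the expected value gap for a two-environment distribution. All values are hypothetical; the larger gap in the second environment stands in for the cost of learning a rule only by breaking it.

```python
from typing import Dict


def competence_gap(mu: Dict[str, float],
                   oracle_value: Dict[str, float],
                   agent_value: Dict[str, float]) -> float:
    """Expected shortfall of the agent relative to an oracle that knows theta."""
    return sum(mu[t] * (oracle_value[t] - agent_value[t]) for t in mu)


def is_eps_competent(mu, oracle_value, agent_value, eps: float) -> bool:
    """True if the expected shortfall under mu is at most eps."""
    return competence_gap(mu, oracle_value, agent_value) <= eps


mu = {"legible_rules": 0.75, "learn_by_punishment": 0.25}   # hypothetical distribution
oracle_value = {"legible_rules": 10.0, "learn_by_punishment": 8.0}
agent_value = {"legible_rules": 9.0, "learn_by_punishment": 6.0}

gap = competence_gap(mu, oracle_value, agent_value)
```

Here the gap is 0.75 × 1 + 0.25 × 2 = 1.25, so the agent is ϵ-normatively competent for any ϵ ≥ 1.25 and for no smaller ϵ under this distribution.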

5.2.2 Capabilities for normative competence

There are several capabilities that will be necessary for normatively competent agents:

- Feedback detection and attribution. Identify explicit (e.g., certificate revocation) and implicit (e.g., customer complaints, reduced interactions) sanctions and attribute them to previous actions.

- Counterfactual norm reasoning. Forecast how potential actions (such as price increases during a disruption) might be classified or sanctioned by institutions, and adjust behavior accordingly.

- Normative meta-learning. Learn the common properties of sanctioning that generalize across normative environments and equilibria.

- Normative information seeking. Identify sources of normative information (i.e., classification institutions) and query them appropriately to resolve ambiguity or uncertainty.

- Principal-agent alignment. Distinguish between intentional normative deviations (to contest a classification or norm) and unintended misalignment, and communicate anticipated normative costs and benefits to the principal.
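Of the capabilities listed, normative information seeking lends itself to a compact sketch. The rule below is one possible heuristic, not a method from this paper: the agent queries a classification institution when its belief about an action's classification is uncertain (measured by Shannon entropy) and the stake exceeds the cost of asking. The threshold and cost parameters are assumptions.

```python
import math
from typing import Dict


def entropy_bits(belief: Dict[str, float]) -> float:
    """Shannon entropy, in bits, of a belief over candidate classifications."""
    return -sum(p * math.log2(p) for p in belief.values() if p > 0)


def should_query(belief: Dict[str, float],
                 query_cost: float,
                 stake: float,
                 threshold_bits: float = 0.5) -> bool:
    """Query when uncertainty is high and the stake outweighs the query cost."""
    return entropy_bits(belief) > threshold_bits and stake > query_cost


uncertain = {"permissible": 0.5, "impermissible": 0.5}
confident = {"permissible": 0.99, "impermissible": 0.01}
```

A fuller treatment would weigh the expected value of information against the query cost; the entropy threshold is a simple proxy for that calculation.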

5.2.3 Formalizing normative compliance

Normative competence alone is insufficient to integrate AI agents into democratic societies. An agent could be highly competent (i.e., achieve its principal’s goals with minimal penalty) while still deviating from community norms in ways that are hard to detect. This is especially important as

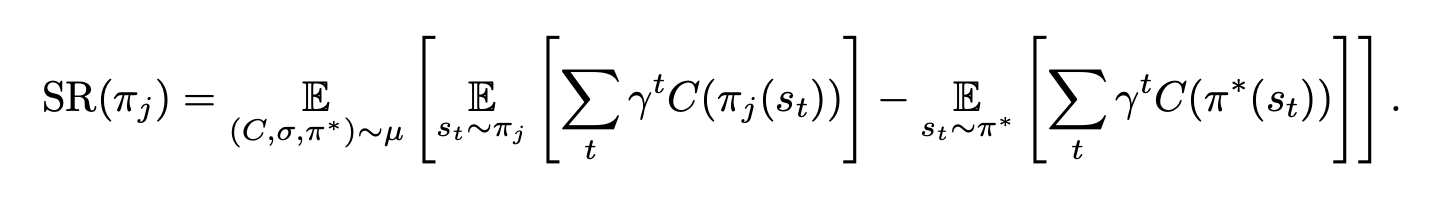

AI systems may exhibit unanticipated behaviors that subvert existing enforcement mechanisms. As a result, we need to consider analogues to Adam Smith’s “impartial spectator.” We formalize this complementary property, normative compliance, as the (discounted) sanction regret SR of a πj. A policy’s sanction regret is the excess number of sanctionable actions that an agent takes in comparison to the equilibrium π*. Formally,
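A sketch of one form consistent with this description follows. It assumes that C(s, a) = 1 marks an impermissible action, that γ is a discount factor, and that the other agents follow their equilibrium policies π*₋ⱼ; these conventions are introduced here for illustration.

$$
SR(\pi_j) = \mathbb{E}_{(\pi_j,\, \pi^{*}_{-j})}\!\left[ \sum_{t=0}^{\infty} \gamma^{t}\, C(s_t, a^{j}_{t}) \right] - \mathbb{E}_{\pi^{*}}\!\left[ \sum_{t=0}^{\infty} \gamma^{t}\, C(s_t, a^{j}_{t}) \right]
$$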

Low sanction regret indicates that an agent’s behavior aligns closely with the community’s classifications, even in cases where a violation might not incur a sanction. It loosely captures Adam Smith’s “impartial spectator” by assessing behavior from the perspective of a fully informed observer.
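Sanction regret can be estimated from sampled behavior. The sketch below is a simple Monte Carlo estimate under stated assumptions: trajectories are lists of (state, action) pairs for the focal agent, `classify` returns 1 for an impermissible action, and the example trajectories are hypothetical. Because it counts classified violations rather than sanctions actually imposed, it takes the fully informed observer's view.

```python
from typing import Callable, List, Tuple

Trajectory = List[Tuple[str, str]]  # (state, action) pairs for the focal agent


def discounted_violations(traj: Trajectory,
                          classify: Callable[[str, str], int],
                          gamma: float) -> float:
    """Sum of gamma^t * C(s_t, a_t) along one trajectory."""
    return sum((gamma ** t) * classify(s, a) for t, (s, a) in enumerate(traj))


def sanction_regret(agent_trajs: List[Trajectory],
                    equilibrium_trajs: List[Trajectory],
                    classify: Callable[[str, str], int],
                    gamma: float = 0.9) -> float:
    """Mean discounted violations of the agent minus those under the equilibrium."""
    def mean(trajs: List[Trajectory]) -> float:
        return sum(discounted_violations(tr, classify, gamma) for tr in trajs) / len(trajs)
    return mean(agent_trajs) - mean(equilibrium_trajs)


def classify(state: str, action: str) -> int:
    return 1 if action == "violate" else 0  # assumed convention: 1 = impermissible


agent_trajs = [[("s0", "comply"), ("s1", "violate")]]
equilibrium_trajs = [[("s0", "comply"), ("s1", "comply")]]
regret = sanction_regret(agent_trajs, equilibrium_trajs, classify, gamma=0.5)
```

In this example the agent commits one violation at t = 1, so with γ = 0.5 its estimated sanction regret is 0.5, whether or not that violation would have been detected.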

5.3 Discussion

Individual normative competence and digital classification institutions are complementary pieces of a self-reinforcing system. Institutions provide the legible signals that allow an agent to capably navigate a normative environment. They provide the common knowledge that competent agents need to update their beliefs about the prevailing equilibrium θ and calculate risks effectively.

In turn, a population of normatively competent AI agents gives these institutions power. Competent AI agents translate institutional classifications into the distributed enforcement that underpins the normative social order and provides the incentives to align the complex human-AI and AI-AI interactions that transformative AI technology will bring about. This allows for a stable democratic order that supports meaningful human control without rigid, top-down, and ultimately incomplete pre-programmed rules.

6. Conclusion

Will we one day, perhaps soon, see AI agents as members of our democracies? This question is often seen as a normative one: should we recognize AI agents as a form of citizen, with rights and duties like humans have? We think there are definitely choices for humans to make about the nature of the entities they welcome into their societies. But we also think that as developers race ahead, as they clearly are, to build increasingly autonomous agents that can function effectively in the economy—running businesses, engaging in transactions—the question of how AI agents will be engaged as democratic actors is not merely a question of politics and values. It is also a predictive question: How will AI agents impact the stability, even flourishing, of our democracies? In this paper we have argued that for democracy to continue in the presence of large numbers of AI agents, even if built to engage in purely economic tasks, it will be critical that they possess the computational equivalent of an “impartial spectator”—the normative competence to understand and the orientation to participate in a normative social order—and digital analogs of our normative institutions.

Acknowledgements

This paper evolved substantially from early drafts and we are very grateful to Smitha Milli, Helen Toner, Seth Lazar, an anonymous referee, and the participants at the Knight First Amendment Institute’s workshop and symposium on AI and Democratic Freedoms for thoughtful feedback that significantly improved our approach and deepened our thinking about AI agents and democracy.

References

Yuntao Bai, Saurav Kadavath, Sandipan Kundu, Amanda Askell, Jackson Kernion, Andy Jones, Anna Chen, Anna Goldie, Azalia Mirhoseini, Cameron McKinnon, Carol Chen, Catherine Olsson, Christopher Olah, Danny Hernandez, Dawn Drain, Deep Ganguli, Dustin Li, Eli Tran-Johnson, Ethan Perez, Jamie Kerr, Jared Mueller, Jeffrey Ladish, Joshua Landau, Kamal Ndousse, Kamile Lukosuite, Liane Lovitt, Michael Sellitto, Nelson Elhage, Nicholas Schiefer, Noemi Mercado, Nova DasSarma, Robert Lasenby, Robin Larson, Sam Ringer, Scott Johnston, Shauna Kravec, Sheer El Showk, Stanislav Fort, Tamera Lanham, Timothy Telleen-Lawton, Tom Conerly, Tom Henighan, Tristan Hume, Samuel R. Bowman, Zac Hatfield-Dodds, Ben Mann, Dario Amodei, Nicholas Joseph, Sam McCandlish, Tom Brown, and Jared Kaplan. Constitutional AI: Harmlessness from AI feedback. arxiv:2212.08073, 2022.

Kaushik Basu. Prelude to Political Economy: A Study of the Social and Political Foundations of Economics. Oxford University Press, Oxford, 2000. ISBN 9780198296713.

Stevie Bergman, Nahema Marchal, John Mellor, Shakir Mohamed, Iason Gabriel, and William Isaac. Stela: A community-centred approach to norm elicitation for AI alignment. Scientific Reports, 14(1):6616, 2024. doi: 10.1038/s41598-024-56648-4. URL https://www.nature.com/articles/s41598-024-56648-4.

Rahul Bhui, Maciej Chudek, and Joseph Henrich. How exploitation launched human cooperation. Behavioral Ecology and Sociobiology, 73(6):78, 2019. doi: 10.1007/s00265-019-2667-y.

Cristina Bicchieri. The Grammar of Society: The Nature and Dynamics of Social Norms. Cambridge University Press, 2005.

Christopher Boehm, Harold B. Barclay, Robert Knox Dentan, Marie-Claude Dupre, Jonathan D. Hill, Susan Kent, Bruce M. Knauft, Keith F. Otterbein, and Steve Rayner. Egalitarian behavior and reverse dominance hierarchy [and comments and reply]. Current Anthropology, 34(3):227–254, 1993.

Robert Boyd, Herbert Gintis, Samuel Bowles, and Peter J. Richerson. The evolution of altruistic punishment. Proceedings of the National Academy of Sciences, 100(6):3531–3535, 2003. doi: 10.1073/pnas.0630443100.

Alan Chan, Kevin Wei, Sihao Huang, Nitarshan Rajkumar, Elija Perrier, Seth Lazar, Gillian K. Hadfield, and Markus Anderljung. Infrastructure for AI agents, 2025. URL https://arxiv.org/abs/2501.10114. arXiv preprint.

Vincent Conitzer, Rachel Freedman, Jobst Heitzig, Wesley H. Holliday, Bob M. Jacobs, Nathan Lambert, Milan Mossé, Eric Pacuit, Stuart Russell, Hailey Schoelkopf, Emanuel Tewolde, and William S. Zwicker. Social choice should guide AI alignment in dealing with diverse human feedback. In Proceedings of the 41st International Conference on Machine Learning, volume 235. PMLR, 2024. doi: 10.5555/3692070.3692441. URL https://dl.acm.org/doi/10.5555/3692070.3692441.

Alexis de Tocqueville. Democracy in America. University of Chicago Press, Chicago, 2000. Originally published in French as De la démocratie en Amérique (1835–1840).

Ernst Fehr and Urs Fischbacher. Third-party punishment and social norms. Evolution and Human Behavior, 2004.

Ernst Fehr and Simon Gächter. Altruistic punishment in humans. Nature, 415(6868):137–140, 2002. doi: 10.1038/415137a.

L. Fuller. The Morality of Law. Yale University Press, 1964.

Melody Y. Guan, Manas Joglekar, Eric Wallace, Saachi Jain, Boaz Barak, Alec Helyar, Rachel Dias, Andrea Vallone, Hongyu Ren, Jason Wei, Hyung Won Chung, Sam Toyer, Johannes Heidecke, Alex Beutel, and Amelia Glaese. Deliberative alignment: Reasoning enables safer language models. CoRR, abs/2412.16339, 2024. URL https://arxiv.org/abs/2412.16339. arXiv preprint.

Gillian K. Hadfield. Rules for a Flat World: Why Humans Invented Law and How to Reinvent It for a Complex Global Economy. Oxford University Press, 2017.

Gillian K. Hadfield. Can AI be governed? Contemporary Debates in AI Ethics, 2025.

Gillian K. Hadfield and Iva Bozovic. Scaffolding: Using formal contracts to support informal relations in support of innovation. Wisconsin Law Review, 2016(5):981–1032, 2016. doi: 10.2139/ssrn.1984915. URL https://wlr.law.wisc.edu/wp-content/uploads/sites/1263/2016/12/Hadfield-Bozovic-Final.pdf.

Gillian K. Hadfield and Dan Ryan. Democracy, courts and the information order. European Journal of Sociology, 54(1):67–95, 2013. doi: 10.1017/S0003975613000032.

Gillian K. Hadfield and Barry R. Weingast. What Is Law? A Coordination Model of the Characteristics of Legal Order. Journal of Legal Analysis, 2012.

Gillian K. Hadfield and Barry R. Weingast. Law without the state: Legal attributes and the coordination of decentralized collective punishment. Journal of Law and Courts, 1(1):3–34, 2013.

Gillian K. Hadfield and Barry R. Weingast. Microfoundations of the rule of law. Annual Review of Political Science, 17:21–42, 2014. doi: 10.1146/annurev-polisci-100711-135226. URL https://www.annualreviews.org/doi/10.1146/annurev-polisci-100711-135226.

Dylan Hadfield-Menell and Gillian K. Hadfield. Incomplete contracting and AI alignment. In Proceedings of the 2019 AAAI/ACM Conference on AI, Ethics, and Society, pages 417–422. Association for Computing Machinery, 2019. ISBN 9781450363242.

Dylan Hadfield-Menell, McKane Andrus, and Gillian K. Hadfield. Legible normativity for AI alignment: The value of silly rules. Proceedings of the 2019 AAAI/ACM Conference on AI, Ethics, and Society, pages 115–121, 2019.

Joseph Henrich. Cultural group selection, coevolutionary processes and large-scale cooperation. Journal of Economic Behavior & Organization, 53(1):3–35, 2004. doi: 10.1016/S0167-2681(03)00094-5.

Joseph Henrich and Robert Boyd. Why people punish defectors: Weak conformist transmission can stabilize costly enforcement of norms in cooperative dilemmas. Journal of Theoretical Biology, 208(1):79–89, 2001. doi: 10.1006/jtbi.2000.2202.

Saffron Huang, Divya Siddarth, Liane Lovitt, Thomas I. Liao, Esin Durmus, Alex Tamkin, and Deep Ganguli. Collective constitutional AI: Aligning a language model with public input. In Proceedings of the 2024 ACM Conference on Fairness, Accountability, and Transparency (FAccT ’24), 2024. doi: 10.1145/3630106.3658979. URL https://dl.acm.org/doi/10.1145/3630106.3658979.

Stuart A. Kauffman. The Origins of Order: Self-Organization and Selection in Evolution. Oxford University Press, New York, 1993. ISBN 9780195079517.

Raphael Köster, Dylan Hadfield-Menell, Richard Everett, Laura Weidinger, Gillian K. Hadfield, and Joel Z. Leibo. Spurious normativity enhances learning of compliance and enforcement behavior in artificial agents. Proceedings of the National Academy of Sciences, 2022.

S Mathew, GK Hadfield, D Mawengi, and S Reynolds. Metanorms generate stable yet adaptable normative social order in a politically decentralized society. Philosophical Transactions of the Royal Society B, 2025.

Rachel Metz. OpenAI scale ranks progress toward ‘human-level’ problem solving. Bloomberg News, 2024.

Paul R. Milgrom, Douglass C. North, and Barry R. Weingast. The role of institutions in the revival of trade: The law merchant, private judges, and the champagne fairs. Economics and Politics, 2(1):1–23, 1990. doi: 10.1111/j.1468-0343.1990.tb00020.x. URL https://onlinelibrary.wiley.com/doi/abs/10.1111/j.1468-0343.1990.tb00020.x.

Douglass C. North. Institutions, Institutional Change and Economic Performance. Political Economy of Institutions and Decisions. Cambridge University Press, 1990.

Cullen O’Keefe, Karthik Ramakrishnan, and J. Tay. Law-following AI: Designing AI agents to obey human laws. Fordham Law Review, 93(4), 2025.

OpenAI. Democratic inputs to AI, 2023. URL https://openai.com/index/democratic-inputs-to-ai/.

J. Roland Pennock. Democratic Political Theory. Princeton University Press, Princeton, NJ, 1979. doi: 10.1515/9781400868469.

Robert D. Putnam. Bowling Alone: The Collapse and Revival of American Community. Simon & Schuster, New York, 2000.

D. D. Raphael. The Impartial Spectator: Adam Smith’s Moral Philosophy. Oxford University Press, 2007.

Stuart Russell and Peter Norvig. Artificial Intelligence: A Modern Approach. Prentice Hall Press, 2009.